10 Risks of Using Artificial Intelligence in Your Subscription Business, Part 1

Artificial intelligence (AI) is evolving rapidly, with new deals, partnerships, applications and headlines appearing daily. It is a powerful tool for increasing productivity, identifying fraud, enhancing discoverability, and improving quality control and energy management, among thousands of other uses. However, AI also carries notable risks that subscription companies need to understand before relying on it to operate and manage their businesses. In this three-part series, we will look ...

HELLO!

This premium article is exclusively reserved for Subscription Insider PRO members.

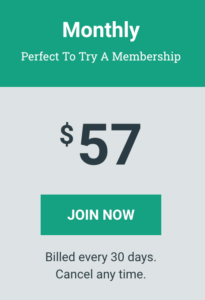

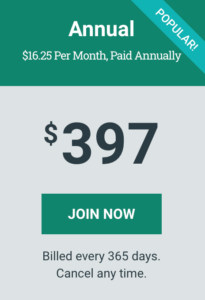

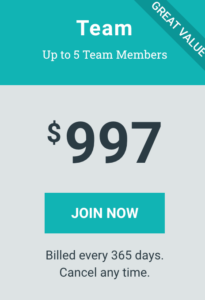

Want access to premium member-only content like this article? Plus, conference discounts and other benefits? We deliver the information you need, for improved decision-making, skills, and subscription business profitability. Check out these membership options!

Learn more about Subscription Insider PRO memberships!

Already a Subscription Insider PRO Member?

Please Log-In Here!
